Fast and lightweight

Image stabilisation, environment mapping

Portable

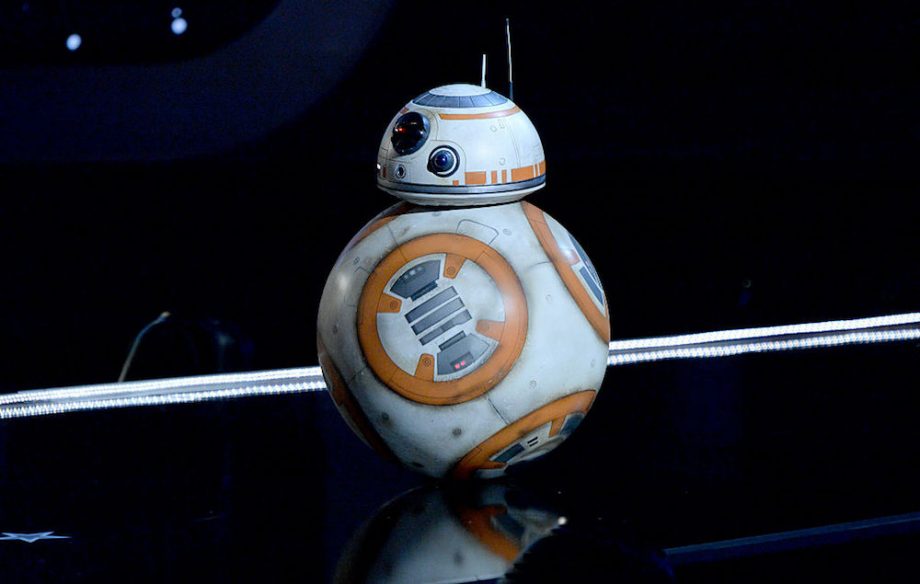

Available for different types of robots: legged, wheeled, and drones

Human interface

Display in mobile app

AUTO-NAVIGATION is the future

Auto-navigating cars are the latest trend in applied machine learning. Big-name internet companies such as Google and Apple and large car manufacturers such as General Motors, Tesla and Toyota are all marching into this industry, in what is also known as the Self-Driving Car Race [1]. With 5G technology becoming available in 2020, bringing much higher data rates and lower latency, the Internet of Things will become more popular and viable than ever, and auto-navigation systems will be a big part of it. In the foreseeable future, auto-navigating cars will take their place on the road. The system behind auto-navigation is its core and is crucial to study, and it is the topic this project focuses on.

Objective

This project aims to develop a four-legged robot that can auto-navigate accurately from point to point and carry out simple tasks such as holding objects or guiding passengers to their designated place. The robot will be deployed on campus to transport objects and to serve as a campus tour guide.

The robot aims to achieve timely, good-quality 360° environment mapping and to navigate in real time without collisions. Fast image recognition is to be achieved with a lightweight trained image recognition model built with TensorFlow. An environment map with a radius of 10 m will be created by cross-mapping the data returned by the ultrasonic sensor with the images returned by the camera. The robot's real-time images and the environment map will be displayed in a mobile app. On top of this, countermeasures on the camera will be deployed for image stabilisation in order to make image processing much faster.
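As a rough illustration of the cross-mapping idea, the sketch below keeps a robot-centred grid covering the 10 m radius and flags a cell as confirmed only when both the ultrasonic sensor and the camera have reported an obstacle there. The grid resolution, the function names and the bit-flag scheme are assumptions made for this example and are not taken from the project itself.

```python
import math

RADIUS_M = 10.0   # mapping radius stated in the objective
CELL_M = 0.25     # assumed grid resolution (hypothetical)
SIZE = int(2 * RADIUS_M / CELL_M)  # cells per side of the square grid

# Hypothetical bit flags recording which sensor saw an obstacle in a cell.
SOURCE_BITS = {"ultrasonic": 1, "camera": 2}


def make_grid():
    """Return a SIZE x SIZE grid of sensor flags with the robot at the centre."""
    return [[0] * SIZE for _ in range(SIZE)]


def to_cell(x_m, y_m):
    """Convert robot-centred metres to (row, col), or None if outside the radius."""
    if math.hypot(x_m, y_m) > RADIUS_M:
        return None
    col = int((x_m + RADIUS_M) / CELL_M)
    row = int((y_m + RADIUS_M) / CELL_M)
    # Clamp the far edge so a point exactly at +RADIUS_M maps to a valid index.
    return min(row, SIZE - 1), min(col, SIZE - 1)


def mark_obstacle(grid, bearing_deg, range_m, source):
    """Record one detection from `source` at the given bearing and range."""
    x = range_m * math.cos(math.radians(bearing_deg))
    y = range_m * math.sin(math.radians(bearing_deg))
    cell = to_cell(x, y)
    if cell is None:
        return None  # beyond the mapped radius, so ignored
    row, col = cell
    grid[row][col] |= SOURCE_BITS[source]
    return cell


def confirmed(grid, cell):
    """True when both sensors have reported an obstacle in this cell."""
    row, col = cell
    return grid[row][col] == SOURCE_BITS["ultrasonic"] | SOURCE_BITS["camera"]
```

For example, an ultrasonic reading at 2.0 m and a camera-derived estimate at 2.1 m on the same bearing land in the same 0.25 m cell, so that cell would count as confirmed. A real system would more likely use probabilistic occupancy updates than simple flags.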

Moreover, a human control mode will be developed and made available in the above-mentioned mobile app so that a person can take over control of the robot in an emergency or in other scenarios that call for it, which is common practice for auto-navigation systems. The end goal of this project is to develop a lightweight, fast and efficient auto-navigation system that is also portable to other forms such as drones and wheeled robots. The collaboration of cameras, sensors, image recognition and the environment mapping engine is the core of the study in this project, with the aim of making the auto-navigation system efficient and widely portable.
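The human take-over described above can be pictured as a small arbitration step that decides which command reaches the robot. This is only a sketch under assumed names: `Command`, `select_command` and the emergency-stop flag are hypothetical and do not describe the project's actual app or control interface.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Command:
    forward: float  # forward speed in m/s
    turn: float     # turn rate in rad/s


STOP = Command(0.0, 0.0)


def select_command(auto_cmd: Command,
                   manual_cmd: Optional[Command],
                   manual_mode: bool,
                   emergency_stop: bool = False) -> Command:
    """Pick the command to execute, giving the human operator priority."""
    if emergency_stop:
        return STOP  # an emergency stop overrides everything else
    if manual_mode:
        # In manual mode the robot stays still until the operator sends input.
        return manual_cmd if manual_cmd is not None else STOP
    return auto_cmd  # otherwise follow the auto-navigation output
```

The choice to stop when manual mode is active but no operator input has arrived is a conservative assumption, so the robot never keeps following the autonomous plan after a person has taken over.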

People

Edison Lo

https://medium.com/@edisonlo