OpenFace

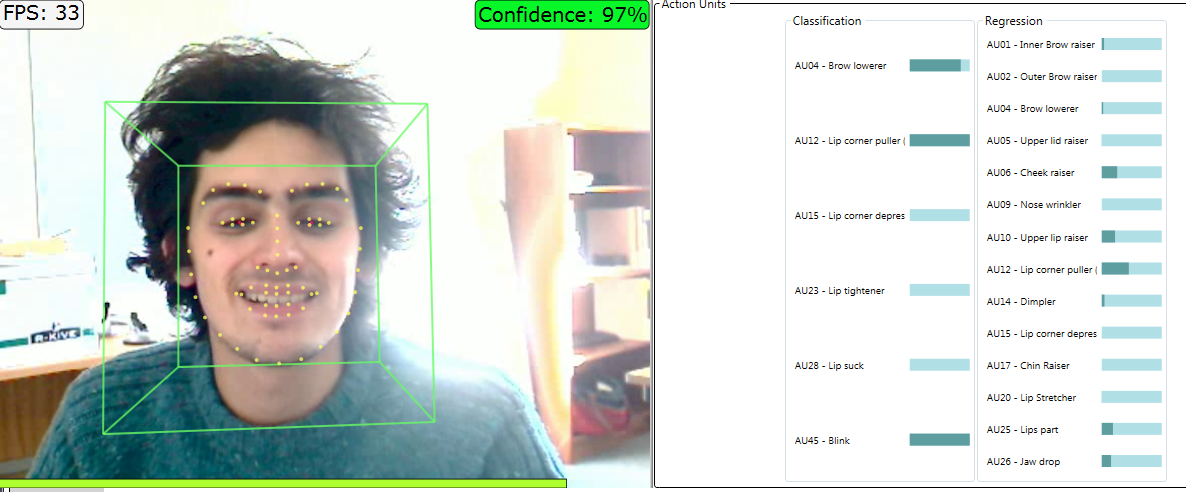

OpenFace is a popular open-source toolkit for facial behavior analysis, built on research by Tadas Baltrušaitis and colleagues. Among its capabilities is facial action unit (AU) detection: it recognizes the presence of 18 AUs and estimates the intensity of 17 of them on a scale from 0 (not present) to 5 (maximum intensity).
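The presence/intensity split can be made concrete with a small parser for per-frame AU output. This is a minimal sketch that assumes the CSV layout written by OpenFace's FeatureExtraction tool, where `AUxx_c` columns hold presence (0 or 1) and `AUxx_r` columns hold intensity; the helper name `active_action_units` and the sample rows are hypothetical and only illustrate the idea.

```python
import csv
import io

def active_action_units(csv_text):
    """Return one dict per frame mapping each present AU to its intensity.

    Assumes OpenFace-style columns: 'AUxx_c' for presence and 'AUxx_r'
    for intensity. Header cells are stripped because OpenFace CSV headers
    may contain leading spaces after each comma.
    """
    reader = csv.reader(io.StringIO(csv_text))
    header = [cell.strip() for cell in next(reader)]
    frames = []
    for row in reader:
        if not row:  # skip blank lines
            continue
        record = dict(zip(header, (cell.strip() for cell in row)))
        active = {}
        for column, value in record.items():
            # Presence is reported as 0 or 1; treat >= 0.5 as present.
            if column.startswith("AU") and column.endswith("_c") and float(value) >= 0.5:
                au = column[:-2]
                # Some AUs (e.g. AU28) have a presence column but no intensity column.
                intensity = record.get(au + "_r")
                active[au] = float(intensity) if intensity is not None else None
        frames.append(active)
    return frames

# Hypothetical sample in the assumed OpenFace column layout.
SAMPLE = """frame, AU01_r, AU12_r, AU01_c, AU12_c, AU28_c
1, 0.20, 2.75, 0, 1, 1
2, 1.40, 0.00, 1, 0, 0
"""

frames = active_action_units(SAMPLE)
print(frames)  # [{'AU12': 2.75, 'AU28': None}, {'AU01': 1.4}]
```

Keying on the presence columns rather than thresholding intensity keeps AUs such as AU28, which OpenFace reports only as present or absent, in the result.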