Yizhou Yu, Andras Ferencz, Jitendra Malik

In this project, we developed two key techniques needed to edit a real scene captured with both cameras and laser range scanners. First, we developed automatic algorithms that segment the geometry from range images into distinct surfaces; second, we register texture from radiance images with that geometry. The result is an object-level representation of the scene whose structure can be modified and then rendered with traditional rendering methods.

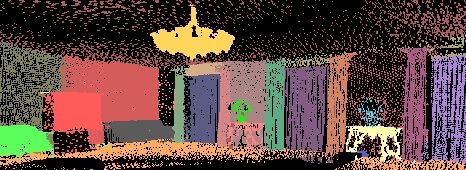

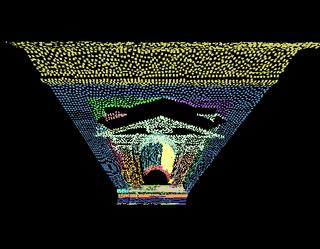

The segmentation algorithm operates directly on the point cloud formed by multiple registered 3D range images rather than on a reconstructed mesh. It is a top-down algorithm that recursively partitions a point set into two subsets, using a pairwise similarity measure based on the 3D location, estimated surface normal, and returned laser intensity of each point. The result is a binary tree whose leaves are individual surfaces.

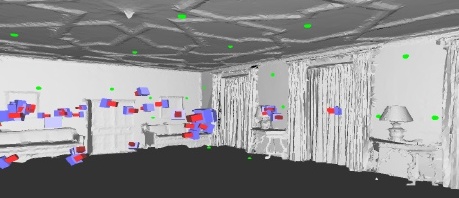
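The page does not specify the exact similarity measure or how each split is chosen, so the following is only a minimal sketch of recursive binary partitioning under stated assumptions. It assumes a hypothetical affinity built from Gaussian kernels on position, normal, and intensity (the `sigma_*` widths are made up), seeds each split with the least similar pair of points, and accepts a split only when its normalized-cut value falls below a hypothetical `max_ncut` threshold; otherwise the set becomes a leaf, i.e. one surface. All names (`ScanPoint`, `segment`, `leaves`) are illustrative, not the authors' code.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class ScanPoint:
    position: Vec3    # 3D location in the common frame of the registered scans
    normal: Vec3      # unit surface-normal estimate
    intensity: float  # returned laser intensity


@dataclass
class Node:
    members: List[int]              # indices into the point list
    left: Optional["Node"] = None   # both children are None for a leaf (a surface)
    right: Optional["Node"] = None


def _sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def similarity(p: ScanPoint, q: ScanPoint, sigma_d: float = 1.0,
               sigma_n: float = 0.5, sigma_i: float = 0.1) -> float:
    # Hypothetical affinity: Gaussian kernels on position, normal and intensity.
    return math.exp(-_sq_dist(p.position, q.position) / (2 * sigma_d ** 2)
                    - _sq_dist(p.normal, q.normal) / (2 * sigma_n ** 2)
                    - (p.intensity - q.intensity) ** 2 / (2 * sigma_i ** 2))


def ncut(points: List[ScanPoint], side_a: List[int], side_b: List[int]) -> float:
    # Normalized-cut value: cut(A,B)/assoc(A,V) + cut(A,B)/assoc(B,V).
    both = side_a + side_b

    def w(i: int, j: int) -> float:
        return similarity(points[i], points[j])

    cut = sum(w(i, j) for i in side_a for j in side_b)
    assoc_a = sum(w(i, j) for i in side_a for j in both)
    assoc_b = sum(w(i, j) for i in side_b for j in both)
    return cut / assoc_a + cut / assoc_b


def segment(points: List[ScanPoint], members: Optional[List[int]] = None,
            max_ncut: float = 0.5) -> Node:
    if members is None:
        members = list(range(len(points)))
    node = Node(members)
    if len(members) < 2:
        return node
    # Seed the split with the least similar pair in the current set.
    a, b = min(((i, j) for k, i in enumerate(members) for j in members[k + 1:]),
               key=lambda pair: similarity(points[pair[0]], points[pair[1]]))
    side_a: List[int] = []
    side_b: List[int] = []
    for m in members:
        closer_to_a = similarity(points[m], points[a]) >= similarity(points[m], points[b])
        (side_a if closer_to_a else side_b).append(m)
    # Keep the set whole if the two halves are still strongly connected.
    if not side_b or ncut(points, side_a, side_b) >= max_ncut:
        return node
    node.left = segment(points, side_a, max_ncut)
    node.right = segment(points, side_b, max_ncut)
    return node


def leaves(node: Node) -> List[List[int]]:
    # Collect the point sets at the leaves of the binary tree.
    if node.left is None:
        return [node.members]
    return leaves(node.left) + leaves(node.right)
```

For example, a 3×3 patch of floor and a 3×3 patch of wall (different normals and intensities) come out as two leaves:

```python
grid = [0.0, 0.5, 1.0]
floor = [ScanPoint((x, y, 0.0), (0.0, 0.0, 1.0), 0.5) for x in grid for y in grid]
wall = [ScanPoint((3.0, y, z), (1.0, 0.0, 0.0), 0.8) for y in grid for z in grid]
print(leaves(segment(floor + wall)))
```

This sketch compares every pair of points at each split, so it is only suitable for toy inputs; a real scan would need sparse, neighborhood-limited affinities and a more robust partitioning method.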

Our image registration technique automatically recovers the camera pose for an arbitrary position and orientation relative to the geometry, so photographs can be taken from any location without precalibrating the camera against the scanner. Before capturing the data, we place a number of easily detectable calibration targets in the scene. The algorithm then performs a combinatorial search to find the correct correspondence between the targets detected in the radiance images and those detected in the range images.

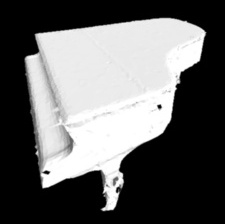

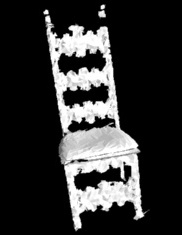
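The combinatorial matching step can be illustrated with a brute-force sketch. To stay short, it swaps in a simplified camera: instead of the full perspective pose the page describes, it assumes an affine camera (a 2×4 matrix fitted by least squares), and it scores every injective assignment of image detections to 3D targets by reprojection error. Under this affine assumption, any assignment of four non-coplanar targets fits exactly, so at least five detections are needed to disambiguate. The function names (`match_targets`, `fit_affine_camera`) are hypothetical.

```python
from itertools import permutations
from typing import List, Optional, Sequence, Tuple

Point2 = Tuple[float, float]
Point3 = Tuple[float, float, float]


def solve_linear(a: List[List[float]], b: List[float]) -> Optional[List[float]]:
    # Gaussian elimination with partial pivoting; None if (near-)singular.
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            return None
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_affine_camera(targets3d: Sequence[Point3],
                      pixels: Sequence[Point2]) -> Optional[List[List[float]]]:
    # Least-squares affine camera: u = p . (X, Y, Z, 1), v = q . (X, Y, Z, 1).
    rows = [list(t) + [1.0] for t in targets3d]
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(4)] for i in range(4)]
    cam = []
    for axis in range(2):
        atb = [sum(r[i] * px[axis] for r, px in zip(rows, pixels)) for i in range(4)]
        coeffs = solve_linear(ata, atb)
        if coeffs is None:
            return None
        cam.append(coeffs)
    return cam


def reprojection_error(cam: List[List[float]], targets3d: Sequence[Point3],
                       pixels: Sequence[Point2]) -> float:
    err = 0.0
    for t, px in zip(targets3d, pixels):
        h = list(t) + [1.0]
        for axis in range(2):
            pred = sum(c * x for c, x in zip(cam[axis], h))
            err += (pred - px[axis]) ** 2
    return err


def match_targets(targets3d: Sequence[Point3], detections: Sequence[Point2]):
    # Try every injective assignment (detection k -> target) and keep the best fit.
    best_err, best = float("inf"), None
    for assignment in permutations(range(len(targets3d)), len(detections)):
        chosen = [targets3d[i] for i in assignment]
        cam = fit_affine_camera(chosen, detections)
        if cam is None:
            continue  # degenerate (e.g. coplanar) subset: camera not determined
        err = reprojection_error(cam, chosen, detections)
        if err < best_err:
            best_err, best = err, assignment
    return best, best_err
```

The search visits m!/(m−n)! assignments for n detections and m targets, so it only scales to a handful of targets; a practical system would prune candidates and estimate a perspective pose (for example with a PnP solver) rather than the affine model assumed here.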

The algorithms have been applied to large-scale real data. We can reconstruct meshes and texture maps for individual objects and render them realistically. We demonstrate our ability to edit a captured scene by moving, inserting, and deleting objects.

This paper appears in IEEE Transactions on Visualization and Computer Graphics.