Announcement

- Dec 9. Please check your marks for homework (1, 2, 3 and 4) and mid-term exam. If you have any issues with the grading, please contact the tutor by Dec 16 (next Monday). Late requests will not be considered.

- Nov 14. Homework 4 is released and due Dec 5. The first question is related to epsilon-net, not to be confused with epsilon-sample.

- Nov 12. There are some typos in question 5 of homework 3, here is an update.

- Oct 10. Homework 3 is released and due Nov 14.

- Sep 12. Homework 2 is released and due Oct 10.

- Sep 3. Welcome to the class. Homework 1 is released and due Sep 12.

This class is designed for students who have a basic knowledge in

algorithms and would like to study more advanced topics in the

subject. NP-complete problems are believed to be not solvable in

polynomial time and we study how approximation algorithms could give

near optimal solutions. In particular, we will see that probability

theory gives us a very powerful tool to tackle problems that are

otherwise hard to solve. The surprising phenomenon that independent

random sources can give rise to almost certain events is known as

measure concentration, and this principle forms the basis of the

analysis of many randomized algorithms. This course is taught in the

style of a mathematics class and we put emphasis on understanding how

the various techniques work. However, we explain the subject from the

computer scientist's viewpoint - our approach is rigorous, but not

pedantic.

Prerequisites: Students should

have a basic knowledge of probability and algorithms. For example,

probability space, discrete and continuous random variables, expectation,

independence,

probability density function, normal (Gaussian) distribution.

However, we would also recall the necessary concepts as the course proceeds. Students from all faculties are welcome to take this class; please email the instructor if you need help to register the class.

Previous Class: This class was previously taught in years 2009, 2010 and 2011.

Topics Covered

- Basic Probability Theory

- Probabilistic Method

- Randomized Algorithms, Conditional Expectation

- Measure Concentration, Method of Moment Generating Function

- Chernoff Bound, Hoeffding Inequality

- Dimension Reduction (Johnson-Lindenstrauss Lemma)

- epsilon-nets, epsilon-samples, VC-dimension

- Rademacher Averages, Haussler's Theorem

- Other Advanced Topics

Textbook

There is no compulsory text, but here are some suggested

textbooks:

Assessment

- 4 homeworks (25%)

- 1 quiz (25%)

- Final examination (50%)

Late Homework Policy:

1 Day late: 50% credit, 0% credit after

If you have special reasons for handing in your homework late, please let us know in advance.

Plagiarism

You are allowed and encouraged to discuss the homework with other students and the tutors.

However, you have to write up the answers alone (i.e., you are not allowed to see the writeup

of another student) and declare the names of the people with whom you have discussed.

As defined in the University's Regulations Governing Conduct at Examinations, plagiarism is the unacknowledged use, as one's own, of work of another person,

whether or not such work has been published. Or put it simply, plagiarism is copying the work of another person without proper acknowledgement.

In case of queries on plagiarism, students are strongly advised to refer to "What is Plagiarism?".

First Attempt:

Students who admit committing plagiarism for the first time shall be warned in writing and receive a zero mark for the component concerned. For those who do not confess, the case would be referred to the Programme Director for consideration.

Subsequent Attempt:

If students commit plagiarism more than once during the course of studies, the case shall be referred to the Programme Director for consideration. The Programme Director will investigate the case and consider referring it to the University Disciplinary Committee, which may impose any of the following penalties: a published reprimand, suspension of study for a period of time, fine, or expulsion from the University.

Lecture notes will be posted as soon as they are available.

- 3 Sep. Course info, basics of probability, probabilistic method.

- 5 Sep. More on Probabilistic Method, Derandomization.

- 10 Sep. Graphs with No Short Cycles (Method of Alteration), More on Derandomization.

- 12 Sep. Set Cover.

- 17 Sep. Chernoff Bound, Measure Concentration.

- 19 Sep. More Measure Concentration: Counting DNF Satisfying Assignments, Hoeffding's Inequality.

- 24 Sep. Continue with Hoeffding's Inequality.

- 26 Sep. More Examples for Measure Concentration: (1) Impossibility Result to Achieve Multiplicative Error for Blackbox Sampling, (2) Pollard's Kangaroo Algorithm.

- 1 Oct. No class on National Day.

- 3 Oct. Continue with Pollard's Kangaroo Algorithm.

- 8 Oct. Johnson-Lindenstrauss Lemma: Dimension Reduction

- 10 Oct. Continue with Johnson-Lindenstrauss Lemma

- 22 Oct. Mid-term Exam.

- 24 Oct. Mid-term Exam follow-up.

- 29 Oct. epsilon-Nets, VC-Dimension

- 31 Oct. Continue with epsilon-Nets, VC-Dimension

- 5 Nov. epsilon-Nets, epsilon-Samples, VC-Dimension (Part 2)

- 7 Nov. Continue with epsilon-Nets, epsilon-Samples, VC-Dimension

- 12 Nov. epsilon-Samples and Rademacher Averages

- 14 Nov. Continue with epsilon-Samples and Rademacher Averages

- 19 Nov. Dudley's Integral

- 21 Nov. Haussler's Theorem

- 26 Nov. Continue with Haussler's Theorem

- 28 Nov. Review

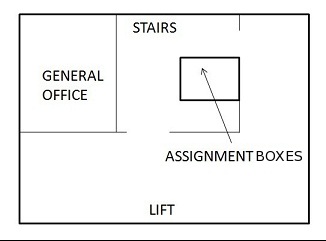

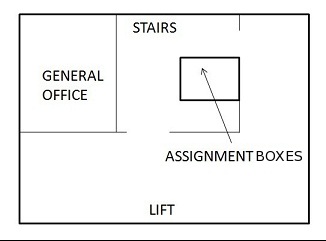

Please put the solution to your homework in the assignment box A7 at the corner of the 3rd-floor lobby in Chow Yei Ching Building on or before the due date at 7pm.

If you have special reasons for handing in your homework late, please let us know in advance.

- Homework 1, due 12 Sep.

- Homework 2, due 10 Oct.

- Homework 3, due 14 Nov.

- Homework 4, due 5 Dec.